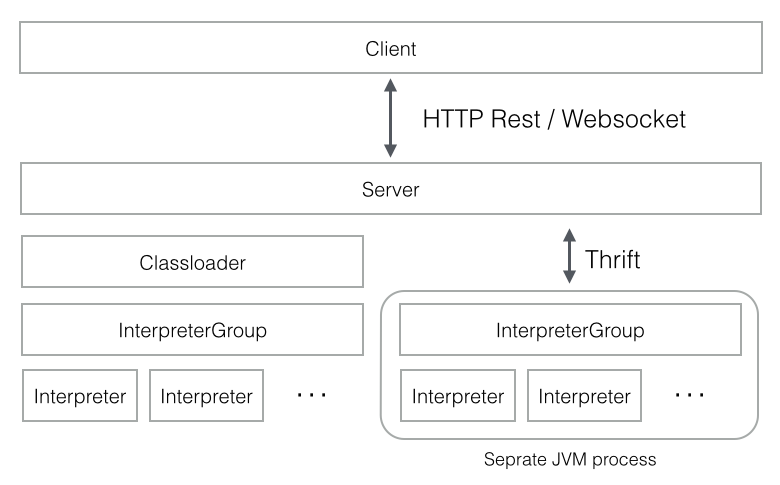

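I am currently running a build of the latest Zeppelin source code on the master server of an HDP 2.5 cluster, and I also have a worker server. Zeppelin is spawning multiple Java processes.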

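On the master server I found several Java processes that were spawned over the last 12 days, never finished, and are still holding memory. After a while the memory fills up and Zeppelin can no longer run under its YARN queue. I have a queue system in YARN: one queue for the JobServer and another for Zeppelin. Zeppelin currently runs as root, but it will be moved to its own service account. The system is CentOS 7.2.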

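The log shows the following processes. I have split up the command lines to make them easier to read. Processes 1 to 3 appear to be Zeppelin; I do not know what processes 4 and 5 are. My questions: Is this a configuration problem? Why does zeppelin-daemon not kill these Java processes? What can be done to fix this?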

<p><strong>PROCESS #1</strong>

/usr/java/default/bin/java

-Dhdp.version=2.4.2.0-258

-cp /usr/hdp/2.4.2.0-258/zeppelin/local-repo/2BXMTZ239/*

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes/

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes/

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes/

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar

:/usr/hdp/current/spark-thriftserver/conf/:/usr/hdp/2.4.2.0-258/spark/lib/spark-assembly-1.6.1.2.4.2.0-258-hadoop2.7.1.2.4.2.0-258.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-api-jdo-3.2.6.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-core-3.2.10.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-rdbms-3.2.9.jar

:/etc/hadoop/conf/

-Xms1g

-Xmx1g

-Dfile.encoding=UTF-8

-Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-spark-root-cool-server-name1.log org.apache.spark.deploy.SparkSubmit --conf spark.driver.extraClassPath=::/usr/hdp/2.4.2.0-258/zeppelin/local-repo/2BXMTZ239/*:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/*:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

:

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar

--conf spark.driver.extraJavaOptions=

-Dfile.encoding=UTF-8

-Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-spark-root-cool-server-name1.log

--class org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer

/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar 44001

</p><p><strong>PROCESS #2 </strong>

/usr/java/default/bin/java -Dhdp.version=2.4.2.0-258

-cp /usr/hdp/2.4.2.0-258/zeppelin/local-repo/2BXMTZ239/*

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes/

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes/

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes/

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar

:/usr/hdp/current/spark-thriftserver/conf/

:/usr/hdp/2.4.2.0-258/spark/lib/spark-assembly-1.6.1.2.4.2.0-258-hadoop2.7.1.2.4.2.0-258.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-api-jdo-3.2.6.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-core-3.2.10.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-rdbms-3.2.9.jar

:/etc/hadoop/conf/

-Xms1g

-Xmx1g

-Dfile.encoding=UTF-8

-Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-spark-root-cool-server-name1.log

org.apache.spark.deploy.SparkSubmit

--conf spark.driver.extraClassPath=

:

:/usr/hdp/2.4.2.0-258/zeppelin/local-repo/2BXMTZ239/*

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

:

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar

--conf spark.driver.extraJavaOptions=

-Dfile.encoding=UTF-8

-Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-spark-root-cool-server-name1.log

--class org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer

/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar

40641

</p><p><strong>PROCESS #3</strong>

/usr/java/default/bin/java

-Dhdp.version=2.4.2.0-258

-cp /usr/hdp/2.4.2.0-258/zeppelin/local-repo/2BXMTZ239/*

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes/

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes/

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar

:/usr/hdp/current/spark-thriftserver/conf/

:/usr/hdp/2.4.2.0-258/spark/lib/spark-assembly-1.6.1.2.4.2.0-258-hadoop2.7.1.2.4.2.0-258.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-api-jdo-3.2.6.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-core-3.2.10.jar

:/usr/hdp/2.4.2.0-258/spark/lib/datanucleus-rdbms-3.2.9.jar

:/etc/hadoop/conf/

-Xms1g

-Xmx1g

-Dfile.encoding=UTF-8

-Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-spark-root-cool-server-name1.log

org.apache.spark.deploy.SparkSubmit

--conf spark.driver.extraClassPath=::/usr/hdp/2.4.2.0-258/zeppelin/local-repo/2BXMTZ239/*

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

:

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar

--conf spark.driver.extraJavaOptions=

-Dfile.encoding=UTF-8 -Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-spark-root-cool-server-name1.log

--class org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer /usr/hdp/2.4.2.0-258/zeppelin/interpreter/spark/zeppelin-spark_2.10-0.7.0-SNAPSHOT.jar 60887

</p><p><strong>PROCESS #4</strong>

/usr/java/default/bin/java

-Dfile.encoding=UTF-8

-Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-cassandra-root-cool-server-name1.log

-Xms1024m

-Xmx1024m

-XX:MaxPermSize=512m

-cp ::/usr/hdp/2.4.2.0-258/zeppelin/interpreter/cassandra/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

:

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer

</p><p><strong>PROCESS #5</strong>

/usr/java/default/bin/java

-Dfile.encoding=UTF-8

-Dlog4j.configuration=file:///usr/hdp/2.4.2.0-258/zeppelin/conf/log4j.properties

-Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-cassandra-root-cool-server-name1.log

-Xms1024m -Xmx1024m -XX:MaxPermSize=512m

-cp ::/usr/hdp/2.4.2.0-258/zeppelin/interpreter/cassandra/*

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/lib/*

::/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-interpreter/target/test-classes

:/usr/hdp/2.4.2.0-258/zeppelin/zeppelin-zengine/target/test-classes org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer </p>
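For anyone comparing dumps like these, a minimal Python sketch follows that summarises a single JVM command line. It relies only on strings visible above: the `-Dzeppelin.log.file=` flag, the `org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer` main class, and the trailing number on the Spark command lines, which looks like a port. The `classify` helper and its field names are hypothetical, not part of Zeppelin. Read this way, processes 4 and 5 appear to be Zeppelin interpreter processes for the Cassandra interpreter, since their log file is `zeppelin-interpreter-cassandra-...`.

```python
import re

# Log-file flag as it appears in the dumps, e.g.
# -Dzeppelin.log.file=/var/log/zeppelin/zeppelin-interpreter-spark-root-<host>.log
LOG_FLAG = re.compile(
    r"-Dzeppelin\.log\.file=\S*/zeppelin-interpreter-([A-Za-z0-9_]+)-"
)
MAIN_CLASS = "org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer"
SPARK_SUBMIT = "org.apache.spark.deploy.SparkSubmit"


def classify(cmdline):
    """Summarise one JVM command line (hypothetical helper, not a Zeppelin API)."""
    tokens = cmdline.split()
    match = LOG_FLAG.search(cmdline)
    # Assumption: a purely numeric last token is the port argument seen on
    # the Spark command lines; the Cassandra dumps show no such token.
    port = int(tokens[-1]) if tokens and tokens[-1].isdigit() else None
    return {
        "is_interpreter": MAIN_CLASS in tokens,
        "interpreter": match.group(1) if match else None,
        "via_spark_submit": SPARK_SUBMIT in tokens,
        "port": port,
    }
```

Feeding it the five command lines joined back into single strings should group processes 1 to 3 under `spark` (each with a different port) and processes 4 and 5 under `cassandra`.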

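Two more questions on this issue, both about interpreter behaviour: (1) When I stop the Zeppelin process, I notice that the interpreter processes keep running. Why? Should I shut them down only through the Zeppelin UI? Why does the script not shut them down? I am running `zeppelin-daemon stop`. (2) Why does the Cassandra interpreter spawn more than one process if it is a single interpreter? – chrisse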
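To check whether interpreter processes really survive a daemon stop, one option is to scan a process listing for the `RemoteInterpreterServer` main class. The Python sketch below is only an illustration: `find_interpreters` and `snapshot` are hypothetical helpers, and `snapshot` assumes a procps `ps` that supports the `etimes` (elapsed seconds) column. It only lists processes; it does not stop or kill anything.

```python
import subprocess

MAIN_CLASS = "org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer"


def find_interpreters(ps_output, min_age_seconds=0):
    """Return (pid, age_seconds) for interpreter JVMs found in ps output.

    Expects the text produced by `ps -eo pid,etimes,args`.
    """
    found = []
    for line in ps_output.splitlines():
        parts = line.split(None, 2)
        # Skip the header row and anything that is not "pid age command".
        if len(parts) < 3 or not parts[0].isdigit() or not parts[1].isdigit():
            continue
        pid, age, args = int(parts[0]), int(parts[1]), parts[2]
        if MAIN_CLASS in args and age >= min_age_seconds:
            found.append((pid, age))
    return found


def snapshot():
    """Capture the current process table (assumes procps ps with `etimes`)."""
    return subprocess.check_output(
        ["ps", "-eo", "pid,etimes,args"], universal_newlines=True
    )
```

Calling `find_interpreters(snapshot(), min_age_seconds=86400)` after stopping the daemon would list interpreter JVMs that have been alive for more than a day, which is one way to confirm that they outlive the stop command.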